Tutorial: How to extract content behind logins

Copy your local authorized cookies into the Extract or Crawl API. (⏲️ 15 Minutes)

Sometimes you need to log into a site to get to some walled-off data.

There are two approaches you can take:

- Download the HTML and send the data in a POST request to the API directly.

- Use cookies to simulate an active session and skip the website's login prompt.

The guide below will demonstrate the latter option.

Let’s assume we want to crawl the articles on TheBrowser.com. TheBrowser is a manually curated, subscription-based website that recommends interesting links from around the web.

Getting the Login Cookie

Once you have an account on the site you want to crawl, log into it.

Once logged in, open your browser’s Dev Tools (right-click anywhere on the page and choose Inspect) and select Console, or go to View -> Developer -> JavaScript Console. In the console that opens, type

document.cookie

This will return all the cookies that JavaScript can read on this website in one long string (cookies flagged HttpOnly are not included). Copy all of it.

We now have the login cookie copied!

Note – this method will often grab more than the login cookie, as all of the site’s cookies are there, from traffic analysis to ads. It’s better to err on the side of caution and grab too much than too little.
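To see what the copied string actually contains before pasting it anywhere, it can help to split it into individual cookies. The snippet below is a minimal sketch, not part of any Diffbot tooling: `parse_cookie_string` is a hypothetical helper, and the cookie names and values are made up for illustration.

```python
def parse_cookie_string(cookie_string):
    """Split a `document.cookie` string into a name -> value dictionary."""
    cookies = {}
    for pair in cookie_string.split(";"):
        pair = pair.strip()
        if not pair:
            continue  # skip empty fragments, e.g. after a trailing ";"
        # Split on the first "=" only; cookie values may contain "=" themselves.
        name, _, value = pair.partition("=")
        cookies[name.strip()] = value.strip()
    return cookies


# Hypothetical cookie string; real names and values will differ per site.
copied = "_ga=GA1.2.111.222; session_id=abc123==; ad_pref=none"
cookies = parse_cookie_string(copied)

for name in sorted(cookies):
    print(name, "=", cookies[name])
```

The full string is still what gets pasted in the steps that follow; the breakdown is only for inspection.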

Next, we'll show you how to deploy this cookie in 3 different ways: in a Crawl, in Custom Extract API calls, or temporarily in an Extract API call.

One or more of these methods may be applicable for your specific use case.

Method #1: Using the Cookie in Crawls

Your authenticated cookie can be used for authenticated crawling. There are 2 ways to add it to your crawl:

In the Dashboard

In your Crawl Settings, paste the cookie into a field called "Cookie" under the "Custom Headers" section.

With Crawl API

Paste the cookie into a custom header called "Cookie" with your API request.

This will be enough to crawl a site with a login for as long as the session lasts.

Note: Be aware that some cookies last longer than others, and the session might expire after a while. You will then need to repeat the process.
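As a rough illustration of the Crawl API option, the sketch below builds the form-encoded body for a crawl job that carries the cookie as a custom header. It only constructs the payload and does not send it. The endpoint, the parameter names (`token`, `name`, `seeds`, `apiUrl`, `customHeaders`), and the `Name:value` header format are assumptions here and should be checked against the Crawl API reference; the job name, token, and cookie value are placeholders.

```python
from urllib.parse import parse_qs, urlencode

# Assumed endpoint; confirm it in the Crawl API reference.
CRAWL_ENDPOINT = "https://api.diffbot.com/v3/crawl"


def build_crawl_payload(token, name, seed, api_url, cookie):
    """Return a form-encoded body for a crawl job that sends a Cookie header."""
    fields = [
        ("token", token),
        ("name", name),
        ("seeds", seed),
        ("apiUrl", api_url),
        # Assumed format: header name and value joined by a colon.
        ("customHeaders", "Cookie:" + cookie),
    ]
    # urlencode percent-encodes every value, including ":" ";" and "=".
    return urlencode(fields)


payload = build_crawl_payload(
    token="YOUR_TOKEN",                      # placeholder
    name="thebrowser-articles",              # hypothetical job name
    seed="https://thebrowser.com",
    api_url="https://api.diffbot.com/v3/article",
    cookie="session_id=abc123; theme=dark",  # made-up cookie string
)

# Decode the body again to confirm the header survives encoding intact.
decoded = parse_qs(payload)
print(decoded["customHeaders"][0])
```

Sending the job would then be a POST of `payload` to `CRAWL_ENDPOINT` with the content type `application/x-www-form-urlencoded`.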

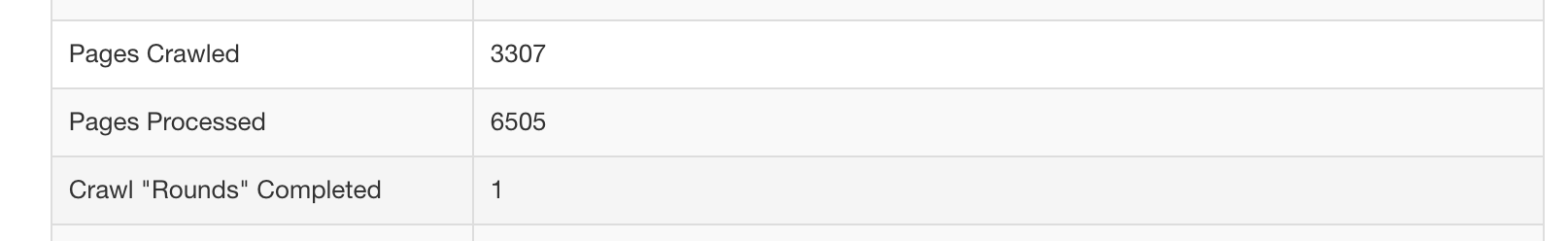

After the crawl job has been running for a while, it will have collected a nice set of results from TheBrowser.


Method #2: Using the Cookie in Extract API Calls

In the Dashboard

- Create a Custom API (either one that extends an Extract API or a fresh Custom API)

- Under "Other Settings", scroll to the field called "Cookie"

- Paste your cookie in

With Extract API

Paste the cookie into a custom header called "Cookie" with your API request. See Custom Headers.

Both methods store the pasted cookie permanently in your Custom API, so future Extract calls that use that API and match its URL pattern will use this cookie for extraction.

Method #3: Temporarily using a cookie with an API

When you want a one-off API call or an API call from the command line using your cookie (for testing purposes, for example), you can pass the header X-Forward-Cookie with the request and Diffbot will forward that cookie along.

Let’s see how that would work with the fantastic API testing tool Postman.

We need to:

- put the API URL in the main field

- open the “params” area and add the token and URL, then select the URL and select “ENCODE” so that it’s properly encoded for sending

- open the headers area and input the cookie
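The same three steps can be sketched outside Postman. The example below builds the request without sending it: the API URL goes in the main field, the token and the encoded target URL go in the query string, and the cookie goes in the `X-Forward-Cookie` header. The endpoint shown is an assumption for illustration, and the token, article URL, and cookie value are placeholders.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Assumed endpoint for illustration; substitute the Extract API you are calling.
API_URL = "https://api.diffbot.com/v3/article"


def build_extract_request(token, page_url, cookie):
    """Build (but do not send) a GET request that forwards a cookie."""
    # urlencode percent-encodes the target URL, which is the step
    # Postman's "ENCODE" option performs.
    query = urlencode({"token": token, "url": page_url})
    return Request(API_URL + "?" + query, headers={"X-Forward-Cookie": cookie})


request = build_extract_request(
    token="YOUR_TOKEN",                               # placeholder
    page_url="https://thebrowser.com/some-article",   # hypothetical page
    cookie="session_id=abc123",                       # made-up cookie string
)

print(request.full_url)
print(request.header_items())
```

Passing `request` to `urllib.request.urlopen` would send it. Note that `urllib` stores the header name as `X-forward-cookie`; HTTP header names are case-insensitive, so this does not change the request's meaning.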

